AI Study Reveals Flaws in Neanderthal Depictions, Urges Better Data Curation

February 21, 2026

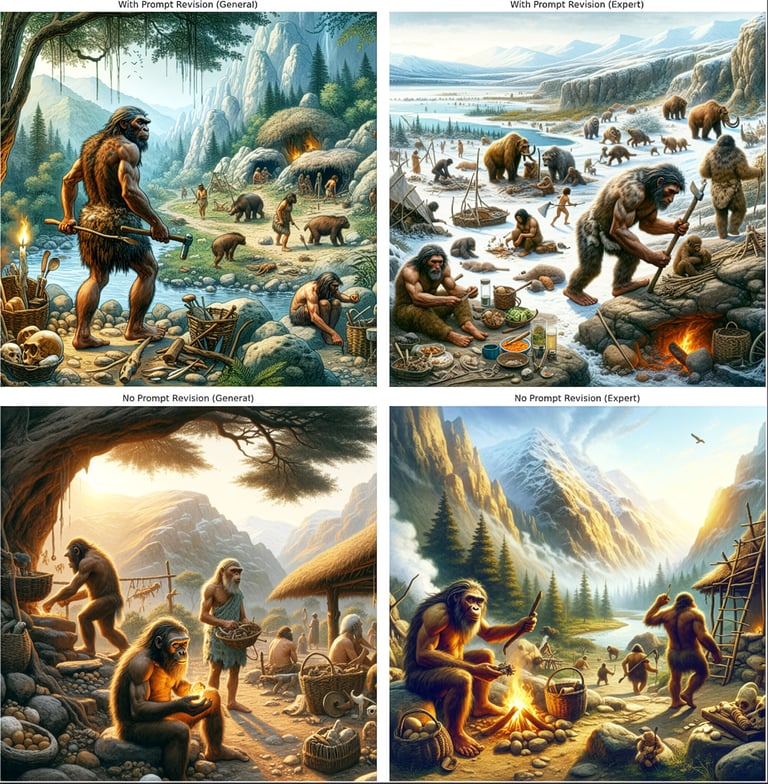

Researchers used ChatGPT and DALL-E 3 to generate hundreds of texts and images about Neanderthals from simple prompts. The results revealed persistent inaccuracies and biases in AI depictions of ancient humans, even when accuracy was explicitly requested.

Researchers recommend better data sourcing, integrating reliable research databases, and re-running tests as AI models evolve to see if biases lessen or shift over time.

The team warns that AI outputs can shape public understanding and education about prehistory, underscoring the need for skepticism and source-checking when using AI for historical content.

The narratives tended to describe basic hunter-gatherer routines and echoed mid-20th-century views, while the visuals resembled imagery from the late 1980s to early 1990s, creating a mismatch between text and image content.

The outputs reflected outdated Neanderthal stereotypes, such as heavily muscled male figures, with little emphasis on women, children, or family life, revealing gender and social bias in the training data.

The study calls for better training data curation, real-time updates from scientific research, and stronger links between chatbots and current databases to reduce erroneous depictions.

AI-generated scenes included anachronistic objects (ladders, thatched roofs, woven baskets, glass vessels, metal tools) that clash with the Neanderthal archaeological record, distorting the timeline and undermining the scenes' plausibility.

The research is published in Advances in Archaeological Practice and provides a template for evaluating gaps between scientific research and AI-generated content, with implications for classrooms, museums, and public perception.

Summary based on 1 source

Source

Earth.com • Feb 21, 2026

Study used AI to recreate the lives of Neanderthals, with laughable results