Sullivan & Cromwell Apologizes for AI-Generated Errors in Legal Filing, Promises Stronger Review Protocols

April 21, 2026

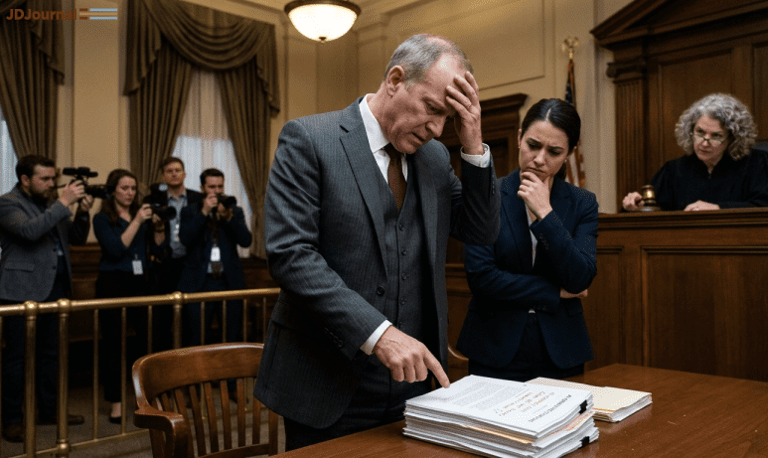

Sullivan & Cromwell apologized to a U.S. Bankruptcy Court chief judge for a filing that relied on inaccurate AI-generated citations and other errors, signaling accountability at the highest level.

Andrew Dietderich, co-chair of the firm’s restructuring practice, told the judge that the firm had not followed its review protocols and was considering strengthening its training and internal review processes.

The coverage also notes two related items: the potential departure of S&C partners Jeffrey Wall and Morgan Ratner for Gibson Dunn, and Damien Charlotin’s tally of AI hallucination cases, which has surpassed 1,300.

Dietderich stressed the firm’s comprehensive AI policies and safeguards, but acknowledged these measures failed to prevent hallucinations and citation inaccuracies.

The reporting references tools like BriefCatch’s RealityCheck and situates the incident within an ongoing debate over AI governance in legal practice.

AI hallucinations in legal work—fabricated citations, misquotations, and non-existent sources—have been rising since 2023, according to researchers tracking the trend.

The correspondence argues that AI in legal workflows requires meticulous human review, including line-by-line verification and printed checks.

The issue is framed as systemic across Am Law 100 firms, with calls for rigorous manual verification rather than overreliance on automated checks.

This incident reflects broader scrutiny of AI in legal research and drafting, where judges have sanctioned lawyers for AI-related inaccuracies, though AI use is not banned if accuracy is ensured.

Lawyers may use AI, but they have an ethical duty to ensure the accuracy of what they submit, and past sanctions serve as a reminder of that obligation.

The pattern of sanctions in AI-related cases underscores the need for thorough vetting of AI outputs in filings.

The piece compares this incident to previous AI failures and cites tools like RealityCheck as safeguards against hallucinations in briefs.

Summary based on 13 sources

Sources

Yahoo News • Apr 21, 2026

Sullivan & Cromwell law firm apologizes for AI 'hallucinations' in court filing

Bloomberg Law • Apr 21, 2026

Sullivan & Cromwell Apologizes to Judge for AI Hallucinations

Reason.com • Apr 22, 2026

AI Hallucinations in Filing by a Top Law Firm