AI Chatbots in Healthcare: Revolutionizing Patient Interaction Amid Safety and Regulatory Concerns

March 2, 2026

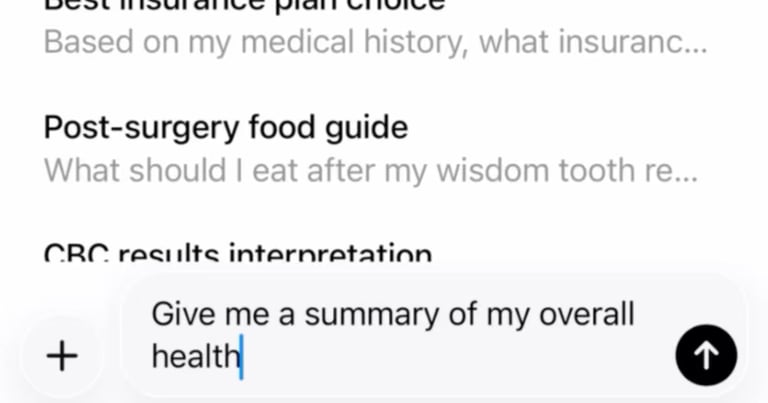

AI chatbots are being developed for health questions, with OpenAI's ChatGPT Health and Anthropic's Claude offering features that analyze medical records and wearable data, though they are not substitutes for professional care and must be used carefully.

Experts recommend a second-opinion approach: consulting multiple chatbots and comparing their recommendations to boost confidence in the information obtained.

Independent testing shows AI can do well on exams but struggles with real-time, nuanced human interactions, including gathering necessary information and distinguishing good guidance from bad.

The Washington Times article notes the content is AI-assisted, based on AP reporting, and provides links to additional resources and the publication’s AI policy.

Regulatory and liability gaps are highlighted because ChatGPT Health is marketed as a consumer product rather than a regulated medical device, leaving the health data used in a large AI service without clear oversight.

A core risk is overconfidence in AI responses, as large language models can produce plausible yet incorrect or unsafe medical advice, which has led to real-world harmful decisions in some cases.

The expansion is driven by high demand, with hundreds of millions of weekly health-related queries, but it raises questions about who can access and who owns medical data, which is a valuable asset for AI training and partnerships.

The introduction of ChatGPT Health could transform the patient-provider dynamic, with clinicians potentially spending more time correcting misunderstandings generated by a chatbot.

Overall, ChatGPT Health marks a significant moment in AI-health integration, offering convenience and personalization while raising concerns about data control, safety, ethics, regulation, and impact on traditional medical relationships.

OpenAI has launched evaluation tools like HealthBench to test safety and accuracy, but these are not guarantees of clinical safety and do not replace professional medical judgment.

Experts acknowledge potential value in personalized responses and aiding patients with limited access to care, while noting risks of inaccuracies and the need for cautious use, especially in emergencies.

Oxford University’s 2024 study found that AI chatbots correctly identified conditions 95% of the time in written scenarios but, in real interactions, failed to gather the necessary information or to distinguish good information from bad; the latest models were not part of that study.

Summary based on 21 sources


Sources

Yahoo News • Mar 2, 2026

What to know before asking an AI chatbot for health advice

AP News • Mar 2, 2026

Chatbots from ChatGPT and Claude offer health advice

PBS News • Mar 2, 2026

5 things you should consider before asking an AI chatbot for health advice

Economic Times • Mar 2, 2026

What to know before asking an AI chatbot for health advice