AI Enhances Medical Diagnostics, Outperforming Some Clinicians in Key Benchmarks

April 30, 2026

Current AI tools are not autonomous clinicians. They lack sensory input from physical exams and other modalities, which underscores the need for validation, equity, cost-effectiveness, safety, transparency, and ongoing monitoring.

Evaluation of o1-preview showed improvements across differential diagnosis, diagnostic test selection, and management reasoning, outperforming prior models and some human clinicians across multiple domains.

In key benchmarks, the model included the correct diagnosis in its differential for 78.3% of NEJM conference cases, reached 52% first-diagnosis accuracy, and reached 97.9% accuracy when potentially helpful or close diagnoses were counted.
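To make the difference between those figures concrete, here is a minimal sketch of how "correct diagnosis included in the differential" and "first-diagnosis accuracy" can diverge on the same set of cases. The function name, the toy cases, and the exact-string matching are all assumptions for illustration; the study's actual grading procedure is not described here and presumably relied on clinician judgment rather than string comparison.

```python
def differential_metrics(cases):
    """Score ranked differentials against known diagnoses.

    `cases` is a list of (differential, correct_diagnosis) pairs, where
    `differential` is a ranked list of diagnosis strings. Exact string
    matching is a simplifying assumption made for this sketch.
    """
    n = len(cases)
    # Correct diagnosis appears anywhere in the ranked list.
    included = sum(1 for ddx, truth in cases if truth in ddx)
    # Correct diagnosis is ranked first.
    first = sum(1 for ddx, truth in cases if ddx and ddx[0] == truth)
    return {
        "inclusion_accuracy": included / n,
        "first_diagnosis_accuracy": first / n,
    }


# Hypothetical toy cases, not data from the study.
toy_cases = [
    (["pulmonary embolism", "pneumonia", "pericarditis"], "pulmonary embolism"),
    (["pneumonia", "pulmonary embolism"], "pulmonary embolism"),
    (["gastritis", "cholecystitis"], "appendicitis"),
    (["lupus", "rheumatoid arthritis"], "lupus"),
]

metrics = differential_metrics(toy_cases)
print(metrics)
```

In this toy set the correct diagnosis is listed in three of four differentials but ranked first in only two, which is why inclusion accuracy is always at least as high as first-diagnosis accuracy.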

Beyond real-world emergency department (ED) data, the study used well-known benchmarks and case reports to assess the AI’s differential diagnosis and decision-making.

An OpenAI reasoning model tested on emergency department cases matched or exceeded experienced physicians in diagnosis and care management, with strong early-triage performance and an ability to synthesize sparse data.

On a subset of NEJM cases, the AI selected the appropriate next diagnostic test 87.5% of the time and produced high rates of helpful diagnoses. It also achieved near-perfect performance on a curriculum dataset, outperforming GPT-4 and some clinicians.

The model showed strong early-stage triage and the ability to convert unstructured data into plausible differential diagnoses and treatment steps.

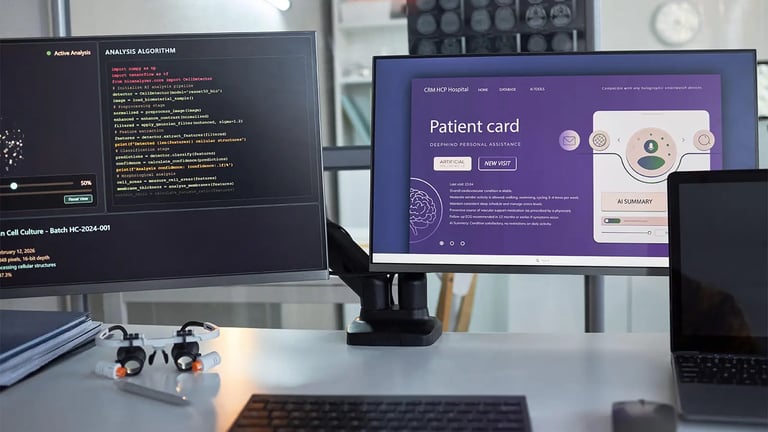

Potential applications include ER triage and serving as a second opinion, though limitations exist due to text-only input and the need to test with imaging and other data types.

Limitations include reliance on retrospective data and curated training sets; real-time performance in live patient care remains untested.

AI is not replacing doctors but augmenting care with better diagnostic support and decision-making, while demanding rigorous prospective trials and careful integration into clinical workflows.

Experts agree that rigorous prospective trials are essential to determine AI’s impact on clinical outcomes and to guide responsible adoption.

The study analyzed text data from electronic health records at three decision points in patient care, including triage and admission, and included real-world cases such as lupus with pulmonary embolism.

Summary based on 10 sources


Sources

Mashable • Apr 30, 2026

Study: AI can outperform doctors on diagnosing cases

Gizmodo • Apr 30, 2026

AI Just Beat Doctors at Diagnosing ER Patients. Don’t Get All Excited